In collaboration with Guiding Eyes for the Blind, tech giant Google piloted a new artificial intelligence system named “Project Guideline” to help people who are blind or have low vision run more independently, without a guide dog or a human guide.

Thomas Panek, CEO of Guiding Eyes for the Blind, ran New York Road Runners’ Virtual Run for Thanks 5K in Central Park using Google’s new AI app. While the virtual race was tracked via GPS, the app used his phone’s camera to look for a line painted on the ground and sent him audio signals to keep him on course.

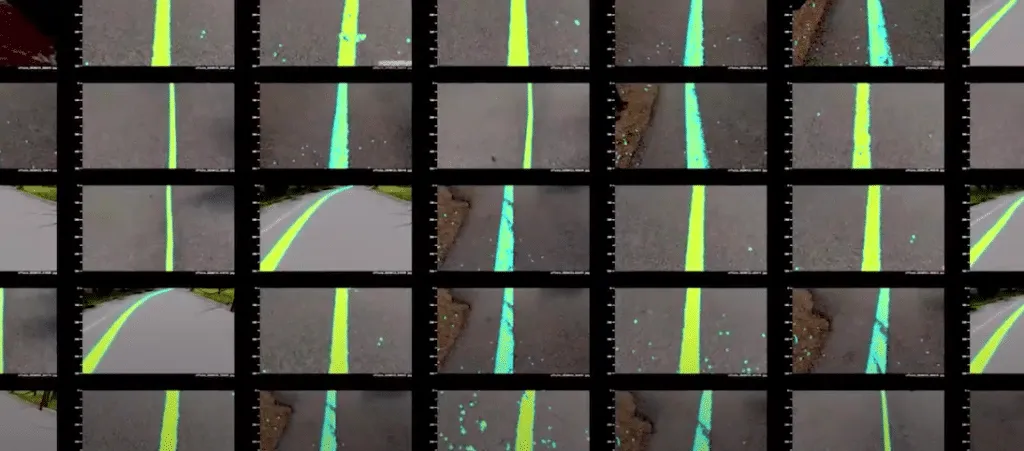

Google’s new AI system is designed to work without an internet connection. It requires only a guideline painted on the ground and gives users audio feedback based on their position relative to that line.

Project Guideline uses the smartphone’s camera to track a line along the route and sends audio cues to the runner through bone conduction headphones. So what is bone conduction? It is the way sound reaches the hearing organs of the inner ear through the bones of the skull rather than through the ear canal. If the runner drifts too far from the center of the line, the sound grows louder in the ear on the side they are drifting toward.
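To make the feedback rule concrete, here is a minimal sketch of how a runner’s sideways distance from the line could be turned into left and right ear volumes. It is purely illustrative and is not Google’s implementation: the function name `stereo_gains`, the `dead_zone` and `max_offset` thresholds, and the base volume are hypothetical, and it assumes the app has already estimated the runner’s lateral offset from the camera image (negative means left of the line, positive means right).

```python
def stereo_gains(offset: float, dead_zone: float = 0.1,
                 max_offset: float = 1.0) -> tuple:
    """Map lateral offset from the guideline to (left, right) ear gains.

    Hypothetical sketch: offset < 0 means the runner is left of the line,
    offset > 0 means right of it. Thresholds are illustrative only.
    """
    if max_offset <= dead_zone:
        raise ValueError("max_offset must be greater than dead_zone")
    base = 0.2  # quiet, balanced tone while the runner is on course
    drift = abs(offset)
    if drift <= dead_zone:
        return (base, base)
    # Scale how far past the dead zone the runner is into the range [0, 1].
    strength = min((drift - dead_zone) / (max_offset - dead_zone), 1.0)
    louder = base + (1.0 - base) * strength
    # The ear on the side the runner is drifting toward gets louder.
    return (louder, base) if offset < 0 else (base, louder)
```

For example, `stereo_gains(-0.55)` raises the left-ear gain to about 0.6 while the right ear stays at the quiet base level, signalling the runner to step back to the right.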


“By sharing the story of how this project got started and how the tech works today, we hope to start new conversations with the larger blind and low-vision community about how, and if, this technology might be useful for them, too,” said Google. “As we continue our research, we hope to gather feedback from more organizations and explore painting guidelines in their communities.”

You can visit goo.gle/ProjectGuideline if you want to test the new system as an individual or collaborate with Google as part of an organization.
