Facebook announced on Tuesday major updates to its Automatic Alt Text (AAT) technology, which was introduced in 2016 to auto-generate photo descriptions using object recognition, in order to give blind or visually impaired (BVI) users a better News Feed experience.

The improved AI now allows AAT to detect and identify 10 times more objects, so BVI users are more likely to encounter photos with detailed descriptions.

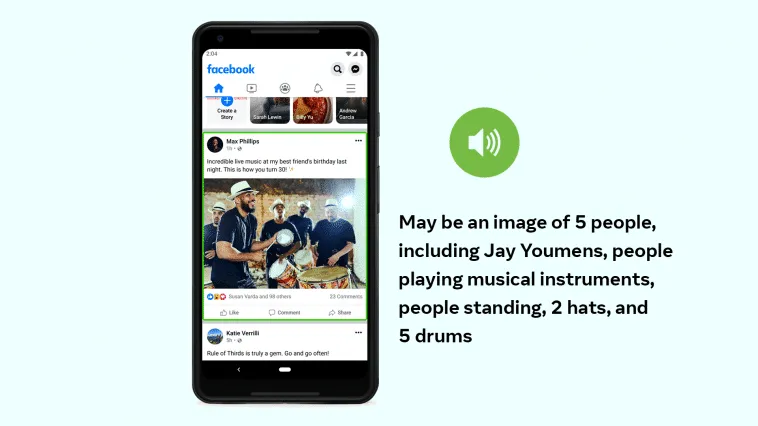

The photo descriptions are also now more detailed, as AAT can identify the composition of the image, including the number of people in the photo. For example: “May be a selfie of 2 people, outdoors, the Leaning Tower of Pisa.”

“Taken together, these advancements help users who are blind or visually impaired better understand what’s in photos posted by their family and friends — and in their own photos — by providing more (and more detailed) information,” the company said in the blog post.

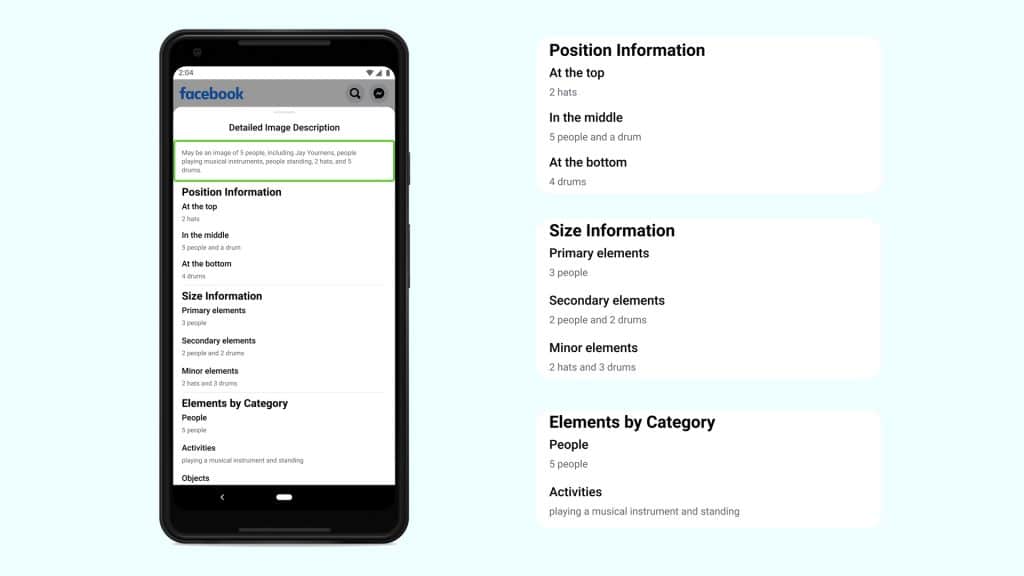

The latest AAT iteration also provides a panel that gives details about the photo’s contents, including positional information, a count of elements, and more. “Detailed descriptions also include simple positional information — top/middle/bottom or left/center/right — and a comparison of the relative prominence of objects, described as ‘primary,’ ‘secondary,’ or ‘minor.’ These words were specifically chosen to minimize ambiguity. Feedback on this feature during development showed that using a word like ‘big’ to describe an object could be confusing because it’s unclear whether the reference is to its actual size or its size relative to other objects in an image. Even a Chihuahua looks large if it’s photographed up close!” the company wrote.

iOS users can access the photo descriptions by using the new custom actions through VoiceOver, while Android users can see descriptions by long-pressing the image. Apple is still rolling out the update for users.
